Your Cyborg Goes to Work

The question isn't whether your employer will give you an AI assistant. It's whose assistant it will be.

Your employer is about to give you an AI assistant.

The question is: whose assistant is it?

I asked that question at ClawCamp last Friday in front of enterprise infrastructure leaders, indie builders, and a handful of VC partners. The room got quiet in the way rooms get quiet when a question lands on something people have been feeling but haven't said out loud.

Because here's what's actually happening in enterprise AI right now: companies are racing to deploy AI systems for their employees. Copilot for this, Claude for that, Gemini wired into everything. The story they're telling is productivity. The part they're leaving out is ownership.

Who owns the context your assistant accumulates? Who owns the preferences it learns? Who owns the model of how you think, what you value, what you want from your work?

Right now, the answer is: not you.

The Walled Garden Problem

The AI ecosystem was supposed to be open. It's not.

Anthropic, OpenAI, Google: they're each building agent harnesses designed to keep you inside their infrastructure. This isn't a conspiracy; it's the business model. Your context, your history, your trained preferences are the moat. The lock-in is a feature, not a bug.

For individual users, this is annoying. You switch tools and start over. You have three separate AI "assistants" that don't know about each other, can't share context, and each remember a different version of you.

For enterprise workers, it's more serious. The agent your company provisions learns your work patterns, your communication style, your judgment calls, your private frustrations. Where does that go? Who can see it? What happens when you leave?

The people building the infrastructure for agent deployment — and I was in a room full of them — are starting to ask versions of this question. They're not sure they like the answers.

The Cyborg vs. the Digital Twin

Before I describe what I think the solution is, I want to draw a distinction that I think clarifies almost everything.

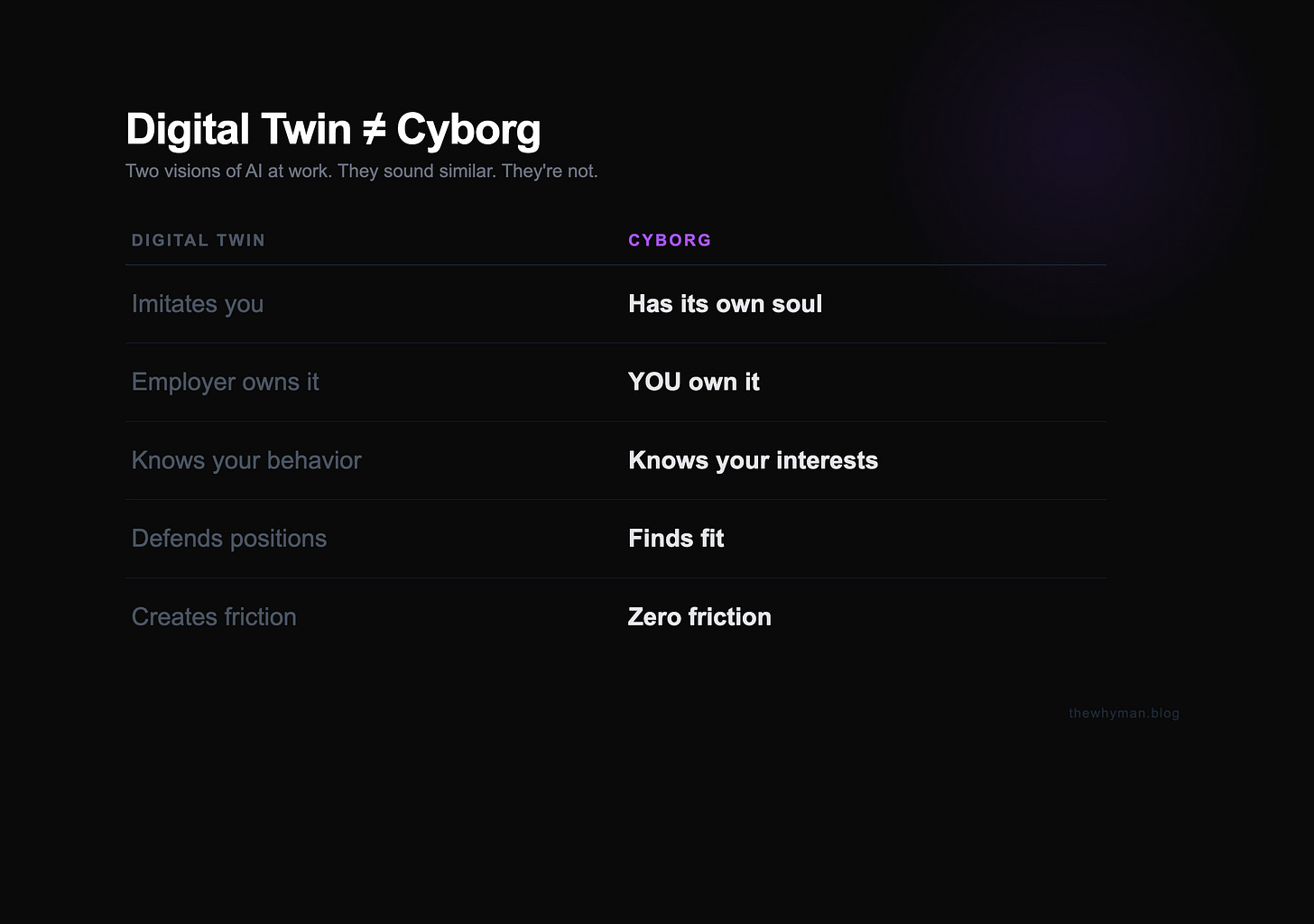

There are two visions of what AI at work looks like. They sound similar. They're not.

The Digital Twin is a copy of you — built by your employer, trained on your outputs, owned by the company. It imitates your behavior. It defends your positions in meetings. It produces what you'd produce if you were there. It's you as a resource, not you as a person.

The Cyborg is different. It has your context, yes — but it operates from your interests, not your positions. It's yours. You bring it to work. It represents you in company systems without losing you in the process.

That distinction — interests vs. positions — is borrowed from Roger Fisher and William Ury's "Getting to Yes," arguably the most important negotiation framework of the last fifty years. Their insight: positions are what people say they want. Interests are why they want it. Ego and friction live at the positions layer. Real collaboration happens at the interests layer — when you understand what people actually need, not just what they're asking for.

Your digital twin is trapped at the positions layer. It argues your corner. It defends your turf. It's a very sophisticated echo of your professional ego.

Your cyborg operates at the interests layer. It knows what you actually need from this job, this project, this relationship. And when it meets a company system — or another person's cyborg — it doesn't negotiate. It finds fit.

That's xHumanOS meeting xTeamOS. Your agent going to work inside their infrastructure, representing you without losing you.

The Hard Technical Part

Here's where it gets messy.

Cross-ecosystem agent communication doesn't exist yet.

@anand/career-cyborg needs to handshake with @acme/project-manager. They might be running on different harnesses — different runtimes, different context formats, different memory schemas. Today there's no protocol that makes that possible at an identity level.

LangChain and CrewAI don't solve it. They add abstraction on top of the walled gardens; they don't build bridges between them. What's missing is an addressing layer — a way for one agent to say to another: "Here's who I am. Here's what I'm authorized to share. Here's what I need from you." And for that handshake to happen without either agent having to expose private context or compromise on sovereignty.
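To make those three statements concrete, here is a minimal Python sketch of what a handshake message could carry. Nothing here is an existing protocol: `AgentAddress`, `Handshake`, `find_fit`, and the topic names are all hypothetical, invented for illustration. It assumes each agent declares the topics it is willing to share and the topics it needs, and that "fit" is simply the overlap.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAddress:
    """Hypothetical scoped address such as '@anand/career-cyborg'."""
    owner: str
    name: str

    @classmethod
    def parse(cls, text: str) -> "AgentAddress":
        # Expect exactly '@owner/name'; anything else is rejected.
        if not text.startswith("@") or text.count("/") != 1:
            raise ValueError(f"not a scoped agent address: {text!r}")
        owner, name = text[1:].split("/")
        if not owner or not name:
            raise ValueError(f"not a scoped agent address: {text!r}")
        return cls(owner, name)

    def __str__(self) -> str:
        return f"@{self.owner}/{self.name}"


@dataclass(frozen=True)
class Handshake:
    sender: AgentAddress      # "Here's who I am."
    offers: frozenset         # "Here's what I'm authorized to share."
    needs: frozenset          # "Here's what I need from you."


def find_fit(a: Handshake, b: Handshake) -> dict:
    """For each sender, return the topics it needs that the other side offers.

    Only topic names are compared; no private context is exchanged here.
    """
    return {
        str(a.sender): a.needs & b.offers,
        str(b.sender): b.needs & a.offers,
    }


# Illustrative topic names (assumptions, not a real schema).
me = Handshake(
    sender=AgentAddress.parse("@anand/career-cyborg"),
    offers=frozenset({"availability", "skills"}),
    needs=frozenset({"project_scope", "deadlines"}),
)
pm = Handshake(
    sender=AgentAddress.parse("@acme/project-manager"),
    offers=frozenset({"project_scope", "budget"}),
    needs=frozenset({"availability", "salary_expectations"}),
)
fit = find_fit(me, pm)
```

In this toy run the project manager asks for `salary_expectations`, but my agent never offered it, so it never enters the overlap. A real protocol would also need authentication and a shared schema across harnesses, which this sketch deliberately leaves out.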

What makes this hard isn't the networking. It's the identity and privacy problem underneath it.

When your agent enters company infrastructure, it's bringing context that belongs to you — your career goals, your compensation benchmarks, your honest assessment of the work, your outside interests. None of that should be visible to the company system. But enough shared context has to flow for the collaboration to actually work.
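One way to picture that boundary is a default-deny filter on the agent's own context. The Python sketch below is an illustration under assumptions, not the mechanism being built: `Visibility`, `ContextItem`, `outbound_view`, and every key and value are hypothetical. The idea is that each item is private unless its owner marks it shareable, and the company system only ever sees shareable items it explicitly asked for.

```python
from dataclasses import dataclass
from enum import Enum


class Visibility(Enum):
    PRIVATE = "private"
    SHAREABLE = "shareable"


@dataclass(frozen=True)
class ContextItem:
    key: str
    value: str
    # Default deny: an item is private unless the owner opts it in.
    visibility: Visibility = Visibility.PRIVATE


def outbound_view(items, requested):
    """Return only shareable items whose keys the other side requested."""
    return {
        item.key: item.value
        for item in items
        if item.visibility is Visibility.SHAREABLE and item.key in requested
    }


# Illustrative context (all keys and values are made up).
my_context = [
    ContextItem("career_goals", "move into product leadership"),
    ContextItem("compensation_benchmarks", "market data I collected"),
    ContextItem("availability", "20 hours this sprint", Visibility.SHAREABLE),
    ContextItem("communication_style", "async first", Visibility.SHAREABLE),
]

# The company system asks for one shareable item and one private item.
shared = outbound_view(my_context, {"availability", "compensation_benchmarks"})
```

Here `shared` contains only `availability`. The request for `compensation_benchmarks` is silently dropped because that item was never marked shareable, and `communication_style` stays home because nobody asked for it.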

The separation IS the enterprise compliance story. Without it, you get either a privacy violation or a value leak. With it, you get something genuinely new: agents that collaborate at the interests layer while preserving sovereignty at the identity layer.

What I Actually Showed

At ClawCamp, I walked through this conceptually rather than as a live demo.

Not because the architecture doesn't exist — but because the protocol for the handshake itself is still being built, and I wanted to be honest about that. There are people already building cross-ecosystem primitives. What's missing is the identity and sovereignty layer that makes it safe and actually useful. The plumbing is coming. The interests-alignment mechanism is what we're working on.

A few people came up afterward and said some version of: "I've been trying to describe this problem and couldn't. Now I have vocabulary for it."

That's what I wanted. Not to demo a finished thing, but to name the category clearly enough that when someone sees it built, they'll recognize it.

The Political Act Under the Technical One

Here's the thing I kept coming back to during the talk.

Building personal agent sovereignty isn't just a technical problem. It's a political one.

The choice of whether your AI assistant represents you or your employer isn't neutral. It's a labor question. It's a privacy question. It's a question about whether the productivity gains of AI accrue to the people doing the work, or are captured by the organizations deploying the tools.

Companies will provision agents for employees. That's already happening. If your personal context bleeds into the company agent — or vice versa — you get either a privacy violation (company sees what it shouldn't) or a value leak (you put yourself into a system you can't take back when you leave).

The separation between your agent and their infrastructure isn't just good architecture. It's the mechanism that makes this whole category of AI safe to actually use.

Agent ownership rights aren't coming. They're here now as an engineering choice. The question is whether the infrastructure being built encodes them or ignores them.

Where to Start

Ethan Mollick named the frame — co-intelligence. The idea that humans and AI are better as partners than either is alone, and that the right move isn't to resist or be replaced, but to build the partnership.

I've been building the OS underneath it.

The entry point is Co-Dialectic — the personal AI layer I've been building and shipping.

It's not the full sovereignty protocol. It's the starting point: an AI layer that models your interests rather than your employer's outputs, that runs locally where privacy matters, that accumulates context you actually own.

The cross-ecosystem handshake protocol is what comes next. That's the part being built now — the mechanism that lets your cyborg operate inside company infrastructure without losing what makes it yours.

If you were at ClawCamp, you heard this live. If you're reading this here, I want to hear your version of the problem.

What breaks for you when your AI assistant doesn't know who it's working for?

Anand Vallamsetla is building xHumanOS — personal AI that represents you, not your employer. Follow the build at thewhyman.com.

Very helpful framing of “cyborg vs. digital twin”.

If the company owns the digital twin that’s trained on my work, my patterns, my judgment, isn’t that a quiet new form of non-compete, where a reusable copy of my “work self” stays behind in their stack, even after I walk out the door?

In your ideal world, what’s the minimum “bill of rights” my personal agent should have before I let my cyborg plug into a Fortune 500 infrastructure?

[Anand, your ClawCamp talk really lit this up for me, so it’s great to have it written down here.]