Most teams are using a top-tier model through an untouched conversation.

Vague prompt in. Confident answer out. Nobody checks the citation. The decision ships. A week later, a stat is wrong, a name is misattributed, a “best practice” turns out to be hallucinated, and the trust the model spent months earning evaporates in one Slack thread.

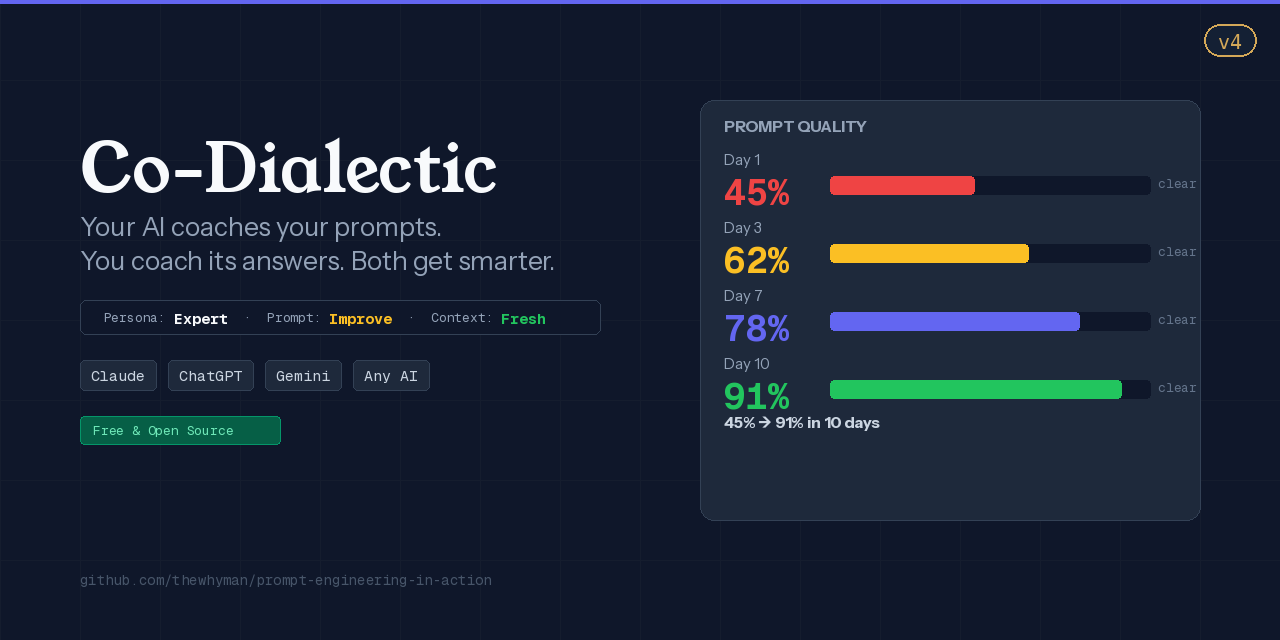

That gap — between the model’s capability and the conversation surrounding it — is where LLM ROI quietly leaks. **Co-Dialectic v4 is the layer that closes it: cross-family verification underneath every message, mean cost around $0.006 per checked artifact.**

## What Co-Dialectic is, in one sentence

Co-Dialectic is a universal AI conversation layer that sharpens your prompt on the way in, runs cross-family verification on the way out, and catches hallucinations and sycophancy before either reaches you — at near-zero marginal cost.

It is not another model. It sits *beneath* whichever model you already pay for (Claude, GPT, Gemini) and raises the floor of every interaction.

## The strongest claim — cross-family judge cascade

Most LLM verification asks the same model family to grade itself. Same training distribution, same RLHF gradient, same blind spots; the verifier rubber-stamps what the author would have said. Co-Dialectic’s cascade routes every high-stakes artifact through small judges from at least two different model families — Anthropic and OpenAI, OpenAI and Google, whatever you wire up — and only escalates to an expensive tiebreaker when the cheap panel disagrees.

Cost: a realistic stack of Haiku 4.5 + GPT-5.4-mini lands at **~$0.006 per checked artifact**; a cheaper tier (4o-mini + Gemini Flash-Lite) also lands under a cent. That’s **10× to 30× cheaper than a naive parallel premium jury** (Opus + GPT-5.4 + Gemini 2.5 Pro running in lockstep), and it catches a broader class of failures than same-family self-grading because the disagreement *is* the signal.
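To make the cascade concrete, here is a minimal sketch of the control flow described above: a cheap panel drawn from at least two families votes, and the tiebreaker runs only on disagreement. The names `FamilyJudge`, `Verdict`, and `cascade_verify` are hypothetical and are not Co-Dialectic's actual API; each judge is modeled as a plain function so the sketch runs without any provider SDK.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

# A judge takes the artifact text and returns True (pass) or False (fail).
# In a real setup this would wrap a call to a small model; here it is a stub.
Judge = Callable[[str], bool]


@dataclass(frozen=True)
class FamilyJudge:
    family: str  # illustrative label, e.g. "anthropic" or "openai"
    judge: Judge


@dataclass(frozen=True)
class Verdict:
    passed: bool
    escalated: bool  # True only if the expensive tiebreaker had to run


def cascade_verify(
    artifact: str, panel: Sequence[FamilyJudge], tiebreaker: Judge
) -> Verdict:
    # Enforce the cross-family requirement: same-family panels share blind spots.
    if len({member.family for member in panel}) < 2:
        raise ValueError("panel needs judges from at least two model families")

    votes = [member.judge(artifact) for member in panel]
    if all(votes) or not any(votes):
        # Cheap panel is unanimous: accept its verdict, skip the expensive call.
        return Verdict(passed=votes[0], escalated=False)

    # Disagreement is the signal: only now pay for the tiebreaker.
    return Verdict(passed=tiebreaker(artifact), escalated=True)
```

In this shape the premium model never sees an artifact the cheap panel agrees on, which is where the cost savings come from.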

This is the primitive no open-source *conversation layer* ships today — LLM eval frameworks exist; a drop-in per-conversation cross-family verifier doesn’t.

## Why v4 matters

Three things actually shipped in v4:

**1. Research-first mode.** Co-Dialectic spawns research sub-agents *before* asking you to act on a question. The default flips: cheap parallel research, then human judgment — instead of human-judgment-first, research-when-forced. Toggle with `co-dialectic research on/off`.

**2. Handoff codification.** At session end, Co-Dialectic scans the conversation for unfinished items, decisions, and lessons, and emits them as structured JSON. The workspace adapter then persists that JSON wherever the workspace defines (GitHub issues, a handoff doc, and so on). No more re-explaining unfinished work to the next session.
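For a feel of what the structured handoff could look like, here is a small sketch. The field names (`unfinished`, `decisions`, `lessons`) and the `TODO:` / `DECISION:` / `LESSON:` prefixes are assumptions made for illustration; the real scan is presumably model-driven rather than prefix-based, and the actual schema may differ.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Handoff:
    # Hypothetical schema: three buckets mirroring the prose description.
    unfinished: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)
    lessons: list[str] = field(default_factory=list)


# Stand-in for the real scan: explicit prefixes mark what goes where.
PREFIXES = {"TODO:": "unfinished", "DECISION:": "decisions", "LESSON:": "lessons"}


def codify(transcript: list[str]) -> str:
    """Collect tagged lines from a transcript and emit them as JSON."""
    handoff = Handoff()
    for line in transcript:
        stripped = line.strip()
        for prefix, bucket in PREFIXES.items():
            if stripped.startswith(prefix):
                getattr(handoff, bucket).append(stripped[len(prefix):].strip())
                break
    return json.dumps(asdict(handoff), indent=2)
```

A workspace adapter would take that JSON string and decide where each bucket lands; that part is left out because it depends entirely on the workspace.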

**3. Cascade routing.** Verification primitives — prompt rewrite, persona detection, sycophancy scan, hallucination pre-flight — route to the smallest model that can handle the job: Haiku 4.5, GPT-5.4-mini, Gemini 2.5 Flash, plus local DeepSeek-R1-Distill-Qwen-7B / Ministral 3 / Phi-4-mini when an Ollama runtime is wired up. The expensive model only fires when stakes warrant it.
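The routing rule can be sketched in a few lines. The tier names, the primitive identifiers, and the `high_stakes` flag are all hypothetical; the sketch only encodes the rule stated above: prefer a local model when one is wired up, fall back to a small API model when not, and reserve the expensive model for high-stakes work.

```python
from enum import IntEnum


class Tier(IntEnum):
    LOCAL = 0      # e.g. a small model served through Ollama
    SMALL_API = 1  # e.g. a mini / flash class hosted model
    PREMIUM = 2    # the expensive model


# Illustrative identifiers for the four verification primitives.
PRIMITIVES = {
    "prompt_rewrite",
    "persona_detection",
    "sycophancy_scan",
    "hallucination_preflight",
}


def route(primitive: str, high_stakes: bool, local_available: bool) -> Tier:
    """Pick the smallest tier that should handle this primitive."""
    if primitive not in PRIMITIVES:
        raise ValueError(f"unknown primitive: {primitive}")
    if high_stakes:
        # Assumption: stakes are decided upstream and passed in as a flag.
        return Tier.PREMIUM
    return Tier.LOCAL if local_available else Tier.SMALL_API
```

Keeping the decision in one pure function makes it easy to log which tier handled what, and therefore to audit where the spend goes.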

These features build toward a larger architecture: **bidirectional standalone** (Co-Dialectic works alone, xOS works alone, and the two compose when both are present) plus the cross-family judge cascade above. That architecture is the strategic frame v4 is filling out, not something a single version delivers.

## The four primitives, explained

**Prompt sharpening.** You type “help me write a launch announcement.” Co-Dialectic rewrites it to: “draft a 250-word launch post for technical leaders, lead with the pain of confidently-wrong LLM output, end with one concrete CTA — open question or specific link?” You accept, edit, or reject before it ships. The sharpened prompt is the product. The model just executes it well.

**Persona system.** Co-Dialectic auto-detects domain — code, product, design, career, writing, debugging, positioning, data, mindset, productivity — and applies a top-0.001%-caliber lens. Jeff Dean-caliber for systems architecture. Shreyas Doshi-caliber for product strategy. Jony Ive-caliber for design. Reid Hoffman-caliber for career strategy. George Orwell-caliber for prose. Linus Torvalds-caliber for debugging. Steve Jobs-caliber for positioning. Nate Silver-caliber for data. Tim Storey-caliber for mindset. Tim Ferriss-caliber for productivity systems. Multi-domain tasks get fused lenses automatically. The product never speaks as the named person, never claims their endorsement, never trains on their work — *caliber* is a comparative quality standard, the way “Hemingway-caliber prose” describes the bar, not the author. Personas are lenses, not delegates; the output is still yours.

**Hallucination detector.** Two stages. Pre-flight: classifies risk surface (factual / quantitative / citation / temporal / proprietary) and conditionally requires grounding sources before the prompt ships. Post-flight: scores every claim against canonical-source criteria and flags high-risk claims before you act. This is the primitive that catches the failure mode that destroys reputations — the confident citation that doesn’t exist.
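A rough sketch of the pre-flight stage, assuming a keyword heuristic in place of whatever classifier the project actually uses. The five surface names come from the description above; the regex patterns, the `GROUNDING_REQUIRED` set, and the `preflight` function are illustrative assumptions only.

```python
import re

# Hypothetical keyword heuristics, one per risk surface named in the text.
RISK_PATTERNS = {
    "factual": re.compile(r"\b(?:who|when|where|which)\b", re.I),
    "quantitative": re.compile(
        r"\d+(?:\.\d+)?\s*%|\bpercent\b|\bhow (?:many|much)\b", re.I
    ),
    "citation": re.compile(
        r"\b(?:cite|citation|source|according to|study|paper)\b", re.I
    ),
    "temporal": re.compile(
        r"\b(?:latest|current|today|recent)\b|\b(?:19|20)\d{2}\b", re.I
    ),
    "proprietary": re.compile(
        r"\b(?:internal|confidential|our (?:company|customers|codebase))\b", re.I
    ),
}

# Assumption: these surfaces are the ones that force grounding sources.
GROUNDING_REQUIRED = {"quantitative", "citation", "proprietary"}


def preflight(prompt: str) -> dict:
    """Classify the prompt's risk surfaces before it ships to the model."""
    surfaces = sorted(
        name for name, pattern in RISK_PATTERNS.items() if pattern.search(prompt)
    )
    return {
        "surfaces": surfaces,
        "needs_grounding": bool(GROUNDING_REQUIRED & set(surfaces)),
    }
```

A prompt that asks for a cited study would be tagged `citation` and held until a grounding source is attached, while a plain rewrite request passes straight through.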

**Calibration auditor.** Passive scanner across every Co-Dialectic-mediated response. Flags sycophancy markers — “Great question!”, “You’re absolutely right!”, “Excellent insight!” — and engagement-maximizing filler. Flattery degrades your critical filter. The auditor surfaces flagged content as a one-line summary before the response renders. Anti-bubble by construction.
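The passive scan is simple enough to sketch directly. The three markers are the ones quoted above; the `audit` function name and the summary wording are assumptions, and a real auditor would presumably carry a longer marker list and also score filler.

```python
import re
from typing import Optional

# The sycophancy markers quoted in the text; both apostrophe styles are accepted.
SYCOPHANCY_MARKERS = [
    r"great question",
    r"you['’]re absolutely right",
    r"excellent insight",
]
_PATTERN = re.compile("|".join(SYCOPHANCY_MARKERS), re.IGNORECASE)


def audit(response: str) -> Optional[str]:
    """Return a one-line summary of flagged markers, or None if clean."""
    hits = [match.group(0) for match in _PATTERN.finditer(response)]
    if not hits:
        return None
    quoted = ", ".join(f'"{hit}"' for hit in hits)
    return f"calibration: {len(hits)} sycophancy marker(s) flagged: {quoted}"
```

The summary would be shown before the response renders, so the reader sees the flattery count first and the content second.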

## Three users it’s built for

**Solo professionals.** Consultants, analysts, founders, writers, lawyers, researchers. You already pay for one LLM subscription. Co-Dialectic gets more reliable answers out of that subscription at the same daily volume: every prompt sharpened on the way in, every response risk-scored on the way out. You ship fewer wrong answers; your clients trust you more.

**Engineering teams shipping LLMs in production.** Copilots, agents, RAG pipelines, classifiers: anything where the verification layer must be open-source and vendor-neutral, so your reliability story is not coupled to one provider’s eval infrastructure. Co-Dialectic drops into any pipeline; the cross-family cascade catches what single-vendor verification misses at a fraction of full-jury cost.

**High-stakes researchers and advisors.** Investment analysts, policy researchers, medical reviewers, legal advisors, academic researchers. One bad source that slips through behind the LLM’s confident tone poisons a memo. Co-Dialectic’s hallucination detector and calibration auditor are the two highest-leverage primitives in this segment.

## Cost discipline (because this is the question I always get)

- Cross-family review with a **flagship-cheap stack** (Haiku 4.5 + GPT-5.4-mini): **~$0.006–$0.008 mean per artifact** at typical sizes. Drop to **prompt-cached + cheaper tier** (4o-mini + Gemini Flash-Lite): **under a cent.**

- Versus a naive parallel premium jury (Opus + GPT-5.4 + Gemini 2.5 Pro): **~10×–30× cheaper depending on stack choice.**

- Local fish-school primitives (rewrite, persona, sycophancy scan, hallucination pre-flight) run on whichever models you have available via Ollama; near-zero marginal cost when wired up, cheap-API fallback when not.

- The expensive model only fires when the cheap cascade disagrees and a tiebreaker is genuinely needed.
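
The cost structure in the bullets above reduces to one line of arithmetic: you always pay for the cheap panel, and you pay for the tiebreaker only on the fraction of artifacts where the panel disagrees. The function and the numbers below are placeholders chosen so the arithmetic is easy to follow; they are not measured prices or disagreement rates from the project.

```python
def expected_cost(
    panel_cost: float, tiebreaker_cost: float, disagreement_rate: float
) -> float:
    """Mean cost per artifact for a cascade with an on-demand tiebreaker."""
    if not 0.0 <= disagreement_rate <= 1.0:
        raise ValueError("disagreement_rate must be between 0 and 1")
    return panel_cost + disagreement_rate * tiebreaker_cost


# Placeholder inputs (assumptions, not benchmarks):
# cheap panel $0.004 per artifact, tiebreaker $0.04, panel disagrees 5% of the time.
cascade = expected_cost(panel_cost=0.004, tiebreaker_cost=0.04, disagreement_rate=0.05)

# A jury that always runs the expensive model is the disagreement_rate = 1.0 case.
always_on = expected_cost(panel_cost=0.004, tiebreaker_cost=0.04, disagreement_rate=1.0)
```

The lever that matters is the disagreement rate: the lower it is, the closer the mean cost sits to the cheap panel alone.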

If you’re spending more than a couple cents per verified artifact, Co-Dialectic will make the bill smaller, not larger.

## What’s not in v4 (intentional)

- A hosted backend. There isn’t one. There won’t be one in the open-source tier.

- A vendor account. Co-Dialectic runs against your existing subscriptions. We never see your prompts.

- Feature gating against the open-source tier. Every primitive listed above ships in AGPL-3.0. The premium tier (xOS plug-in family) adds capabilities for T3-T4 stakes; it does not unlock existing ones.

## How to try it

Install instructions live in the project README (link in the comments). Open any session, type any vague prompt, and notice the difference in the same conversation.

## The deeper bet

The highest-leverage intervention in AI-assisted work is not a better model. It’s a better conversational substrate. Co-Dialectic is that substrate. v4 is the version where it composes cleanly with everything you already use, runs at sub-cent verification cost, and ships open-source without compromise.

If your team is paying for the model and getting half the answer, the gap is the conversation. Close it.

---

*Co-Dialectic v4 is open-source under AGPL-3.0. Repository, docs, and the issue tracker live in the comments.*

*If you ship LLM-powered work to anyone who matters, I’d love to hear what it catches in your first session. Drop a note.*